TL;DR:

- Coordination tax increases with enterprise scale, causing hidden productivity losses.

- Clear roles, governance, and security assessments are essential before implementing AI-driven collaboration tools.

- Continuous iteration, active AI engagement, and leadership modeling sustain long-term collaboration improvements.

Productivity stalls are not always caused by a lack of effort. In many medium and large enterprises, coordination tax drains productivity as teams scale, eating into hours that should go toward real output. Unclear roles, fragmented messaging tools, and manual handoffs create bottlenecks that compound quietly over months. The good news is that a structured, security-aware approach using AI-driven tools and proven process frameworks can cut through the noise, restore accountability, and produce measurable gains. This guide walks you through every stage.

Table of Contents

- Laying the groundwork: prerequisites for collaboration optimization

- Step-by-step: optimizing your collaboration process using AI and hybrid frameworks

- Troubleshooting and avoiding common pitfalls in collaboration optimization

- Verifying results: measuring and sustaining collaboration improvements

- A practitioner's perspective: Why sustainable collaboration depends on culture and iteration

- Accelerate collaboration optimization with secure, enterprise-ready solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Foundation matters | Clear roles and governance are essential before process optimization can succeed. |
| Hybrid & AI boost results | Combining AI and hybrid management frameworks leads to faster, more measurable gains. |
| Avoid passive AI use | Active engagement with AI preserves team motivation and long-term results. |
| Measure, iterate, improve | Use meaningful KPIs and continuous feedback to ensure sustained collaboration success. |

Laying the groundwork: prerequisites for collaboration optimization

Before you change any tool or process, you need a clear picture of what is actually broken. Many IT teams make the mistake of jumping straight to tool selection without addressing the structural gaps underneath. That approach almost always leads to the same problems surfacing in a new environment.

Start with role clarity. The RACI matrix (Responsible, Accountable, Consulted, Informed) is one of the most effective frameworks for making team coordination transparent. Without it, overlapping responsibilities cause confusion, delayed decisions, and unnecessary meetings. When every team member knows exactly who owns each task and who just needs to be informed, communication volume drops and decision speed increases.
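A RACI matrix is easy to check mechanically once it is written down as data. The sketch below assumes a common convention (exactly one Accountable and at least one Responsible per task); the task names, role holders, and the `raci_issues` helper are all hypothetical and only illustrate the idea.

```python
from collections import Counter

# Hypothetical RACI matrix: task -> {person or group: role letter}.
# Names are illustrative and not taken from any real project.
raci = {
    "Select messaging platform": {"CISO": "C", "IT lead": "A", "PM": "R", "Team leads": "I"},
    "Run pilot": {"IT lead": "A", "PM": "R", "Analyst": "R"},
    "Approve data retention policy": {"CISO": "R", "PM": "C"},  # nobody is Accountable
}

def raci_issues(matrix):
    """Return (task, problem) pairs for rows that break the assumed rules."""
    issues = []
    for task, assignments in matrix.items():
        counts = Counter(assignments.values())
        # Assumed convention: exactly one Accountable owner per task.
        if counts["A"] != 1:
            issues.append((task, f"expected exactly 1 Accountable, found {counts['A']}"))
        # Assumed convention: at least one person doing the work.
        if counts["R"] < 1:
            issues.append((task, "no one is Responsible"))
    return issues

for task, problem in raci_issues(raci):
    print(f"{task}: {problem}")
```

Running a check like this whenever the matrix changes is one way to catch ownership gaps before they turn into stalled decisions.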

Key methodologies for collaboration process optimization include defining clear roles and RACI matrices, establishing governance forums with explicit decision rights, using the DMAIC framework (Define, Measure, Analyze, Improve, Control) for cross-functional IT projects, standardizing shared language and process maps, and combining upfront planning with iterative execution through hybrid project management. These are not soft suggestions. They are structural prerequisites.

Governance matters as much as process. A governance forum with explicitly defined decision rights prevents ambiguity at escalation points. Without it, teams either over-escalate small decisions or make consequential calls without proper oversight.

Security posture comes next. Before selecting any AI collaboration tool, you need a baseline assessment of your current security controls. Enterprise messaging platforms handle sensitive project data, personnel information, and sometimes regulated records. Choosing a tool without evaluating its encryption standards, access controls, and compliance certifications is a significant risk.

Readiness checklist

Before moving to implementation, confirm the following are in place:

- RACI matrix drafted and reviewed by all team leads

- Governance forum established with documented decision rights

- Shared glossary created for cross-functional teams

- Current processes mapped (swimlane diagrams work well here)

- Security requirements documented (encryption, access tiers, compliance needs)

- Executive sponsor identified for change management

- Baseline KPIs recorded for future benchmarking

| Prerequisite | Why it matters | Owner |
|---|---|---|
| RACI matrix | Eliminates role overlap and confusion | Department heads |
| Governance forum | Speeds up decisions, reduces escalations | IT leadership |
| Security baseline | Guides tool selection and risk management | CISO or IT security |
| Shared language | Reduces miscommunication across functions | Project managers |
| Process maps | Reveals bottlenecks before they worsen | Business analysts |

Pro Tip: Run a brief "collaboration audit" with your team leads before any optimization initiative. Ask three questions: Where do decisions stall? Where do messages get lost? Where do handoffs fail? The answers almost always reveal the same two or three systemic gaps.

A solid guide to secure team collaboration can help you structure this audit effectively and ensure security requirements are integrated from the start, not bolted on later.

Step-by-step: optimizing your collaboration process using AI and hybrid frameworks

With a solid foundation in place, you can follow a proven process to optimize how your teams communicate and coordinate. The key is phasing your rollout so that each step builds on the previous one, reducing disruption while building momentum.

Step 1: Review and finalize your RACI. This is not a one-time exercise. Pull the matrix into your collaboration platform so it stays visible and current. Stale role definitions are nearly as harmful as having none at all.

Step 2: Select your tools based on security and fit. Not every enterprise messaging platform is built to the same standard. Evaluate options on encryption (at rest and in transit), compliance certifications (SOC 2, ISO 27001), data residency options, and AI feature quality. The platform should fit your workflow, not force you to redesign it.

Step 3: Run a controlled pilot. Choose one cross-functional team or project for your initial deployment. Measure baseline metrics before launch: meeting frequency, decision cycle time, and message response time. Let the pilot run for four to six weeks before drawing conclusions.

Step 4: Train actively, not passively. A platform walkthrough is not enough. Train teams on how to use AI features actively, such as generating summaries, using voice huddles, and triggering real-time translation for multilingual calls. Passive tool adoption produces passive results.

Step 5: Scale with security checkpoints. Before expanding to additional teams or regions, run a security review. Confirm access controls are working as intended, audit logs are clean, and data handling policies are being followed. Phased rollouts with built-in security assurance are what make it possible to scale secure AI tools across a large enterprise. Amgen scaled a similar initiative to 20,000 users by following this phased, security-first model.

Step 6: Optimize continuously. Collect feedback from team leads and individual contributors after each phase. Adjust workflows, update the RACI, and refine AI configurations based on real usage patterns. This is where hybrid methodology earns its value: structured enough to stay on track, adaptive enough to correct course.
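The baseline comparison from Step 3 comes down to simple arithmetic. The sketch below uses made-up numbers and hypothetical metric names; it only shows how to express each pilot metric as a percent change from its pre-launch baseline.

```python
def percent_change(baseline, pilot):
    """Percent change from baseline; negative means the metric went down."""
    return (pilot - baseline) / baseline * 100

# Hypothetical figures for one pilot team (not real data).
baseline = {"meetings_per_week": 12, "decision_cycle_days": 5.0, "response_minutes": 40}
pilot = {"meetings_per_week": 9, "decision_cycle_days": 4.0, "response_minutes": 30}

changes = {
    metric: round(percent_change(baseline[metric], pilot[metric]), 1)
    for metric in baseline
}

for metric, change in changes.items():
    print(f"{metric}: {change:+.1f}%")
```

Recording the baseline before launch is what makes this comparison possible; without it, there is nothing to divide by.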

The evidence behind this approach is compelling. Human-AI synergy has been reported to improve performance by 23 to 29 percentage points with models such as GPT-4o and Llama. In engineering contexts, teams saw 44% faster mean time to merge code and 39% less meeting time. Those numbers come from structured, active deployment, not passive tool adoption.

| Approach | Decision speed | Meeting load | Security control | Adaptability |
|---|---|---|---|---|
| Traditional (manual) | Slow | High | Variable | Low |
| AI-optimized (hybrid) | Fast | Reduced by ~39% | Enforced by design | High |
| Passive AI use | Moderate | Moderate | Inconsistent | Low |

For a detailed look at how to structure each phase, the AI-powered collaboration steps framework provides a practical sequence you can adapt to your organization's size and complexity.

Pro Tip: When piloting, assign a "process observer" to each team. This person is not a manager but a peer who tracks where the new workflow causes confusion. Their input is more candid and often more actionable than formal survey data.

If you are evaluating which platforms to include in your shortlist, the comparison of AI tools for collaboration breaks down the criteria that matter most for enterprise deployments.
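One way to keep a shortlist honest is to treat the security criteria from Step 2 as hard filters rather than weighted scores. The sketch below is a minimal illustration: the `Candidate` class, the vendor names, and the required residency region are assumptions made for the example, not a recommended schema.

```python
from dataclasses import dataclass, field

# Certifications named in Step 2; the required region is an assumed example.
REQUIRED_CERTS = {"SOC 2", "ISO 27001"}
REQUIRED_REGION = "EU"

@dataclass
class Candidate:
    name: str
    encrypts_at_rest: bool
    encrypts_in_transit: bool
    certifications: set = field(default_factory=set)
    residency_regions: set = field(default_factory=set)

def security_gaps(tool):
    """List the hard requirements this candidate fails to meet."""
    gaps = []
    if not tool.encrypts_at_rest:
        gaps.append("encryption at rest")
    if not tool.encrypts_in_transit:
        gaps.append("encryption in transit")
    for cert in sorted(REQUIRED_CERTS - tool.certifications):
        gaps.append(f"missing {cert}")
    if REQUIRED_REGION not in tool.residency_regions:
        gaps.append(f"no {REQUIRED_REGION} data residency")
    return gaps

# Hypothetical vendors, for illustration only.
candidates = [
    Candidate("Tool A", True, True, {"SOC 2", "ISO 27001"}, {"EU", "US"}),
    Candidate("Tool B", True, True, {"SOC 2"}, {"US"}),
]

# Only tools with no gaps make the shortlist.
shortlist = [c.name for c in candidates if not security_gaps(c)]
print(shortlist)
for c in candidates:
    print(c.name, security_gaps(c))
```

Workflow fit and AI feature quality are deliberately left out of the filter; those remain judgment calls for the evaluation team once the security gate has been passed.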

Troubleshooting and avoiding common pitfalls in collaboration optimization

Even with best practices, teams encounter real obstacles. Most of them are predictable. Knowing what to watch for before you hit a wall is far more valuable than troubleshooting after damage is done.

The coordination tax problem. As organizations scale, the overhead of coordinating across teams, time zones, and functions begins to consume a significant share of productive capacity. This is coordination tax: the hidden cost of keeping everyone aligned. It does not announce itself. It shows up as recurring status meetings, redundant message threads, and slow approvals. Left unchecked, it compounds.
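A rough way to see why this cost compounds is to count possible one-to-one communication paths, which grow with the square of headcount. The model below is an illustrative assumption (it treats every pair of people as a potential channel) and is not a measurement of actual coordination tax.

```python
def pairwise_channels(people):
    """Number of distinct person-to-person pairs among `people` individuals."""
    return people * (people - 1) // 2

for size in (5, 50, 500):
    print(f"{size} people -> {pairwise_channels(size)} possible channels")
```

Real organizations never use every possible channel, but the shape of the curve explains why overhead that is invisible in a small team becomes a drag at enterprise scale.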

Coordination tax drains productivity in scaling enterprises, and AI does not offset it automatically: passive AI use reduces self-efficacy and motivation after the transition, whereas active collaboration preserves both. This is a critical distinction. Teams that use AI passively (letting the tool summarize conversations they never read, or auto-assign tasks they do not understand) lose engagement over time. Teams that use AI actively, as a collaborator in their process, retain motivation and improve output quality.

The accountability gap. AI tools accelerate individual tasks with impressive efficiency. They do not, on their own, resolve accountability or communication issues within teams. If no one owns a decision, an AI assistant will not fix that. Governance structures and clear role definitions are still the only reliable solution.

"Prioritize active human-AI collaboration over passive reliance to maintain worker motivation and long-term efficacy; implement governance and shared language early to handle cross-functional edge cases."

Common mistakes to avoid:

- Deploying a new platform without updating the RACI or governance structure first

- Skipping the pilot phase and rolling out to the entire organization at once

- Treating AI-generated summaries as a substitute for actual engagement in discussions

- Selecting tools based on features alone without evaluating security certifications

- Ignoring multilingual teams during tool selection, which creates uneven adoption

- Failing to set and communicate clear data retention and access policies before launch

- Over-automating workflows to the point where team members disengage from outcomes

One more pitfall that gets less attention: misaligned incentives. If team performance is measured individually while collaboration is the stated priority, people will default to individual behavior. Measurement systems must reflect the collaboration goals you are trying to achieve.

Pro Tip: Set a "collaboration health check" as a standing agenda item in your monthly leadership meeting. Review three metrics: message resolution time, meeting-to-decision ratio, and escalation frequency. Trends in these numbers tell you more about team health than any employee survey.

For practical guidance on keeping AI assistance working for your team rather than around it, the boost productivity with AI assistants resource covers specific configuration and engagement strategies.

Verifying results: measuring and sustaining collaboration improvements

After implementation, the real test is whether gains hold over time. Many organizations see an initial burst of improvement followed by a slow slide back to old habits. Preventing that slide requires deliberate measurement and a feedback loop that drives continuous correction.

Use domain-specific KPIs, not generic ones. Measure success with domain-specific KPIs such as cycle time rather than process adherence alone. Hybrid methodologies balance control and adaptability for IT projects, so the metrics you choose should reflect outcomes in each domain. For software delivery teams, mean time to merge is a sharper signal than team satisfaction scores. For project management teams, decision cycle time tells you more than meeting frequency alone.

Key metrics to track:

- Cycle time for key deliverables (per sprint, per project phase)

- Mean time to merge for engineering and development teams

- Meeting-to-decision ratio (how many meetings it takes to reach a concrete decision)

- Message response time within critical channels

- Escalation rate (how often issues bypass the defined decision owner)

- Tool adoption rate across departments and regions

- AI feature engagement (are summaries, huddles, and translation being used actively?)

| Metric | What it signals | Benchmark target |
|---|---|---|
| Cycle time | Workflow efficiency | Reduce by 20% in 90 days |
| Mean time to merge | Engineering coordination | 44% improvement is achievable |
| Meeting-to-decision ratio | Meeting quality | Fewer than 3 meetings per decision |
| Escalation rate | Governance effectiveness | Declining trend month over month |
| AI feature engagement | Active adoption quality | Above 70% of eligible users |
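Several of these targets can be checked with a few lines of arithmetic. The sketch below uses hypothetical 90-day figures and helper names chosen for this example; the thresholds come from the table, and the "declining" test assumes a strict month-over-month decrease.

```python
def meetings_per_decision(meetings_held, decisions_made):
    """Average number of meetings needed to reach one concrete decision."""
    return meetings_held / decisions_made

def reduction(before, after):
    """Fractional reduction from `before` to `after` (0.20 means 20% lower)."""
    return (before - after) / before

def is_declining(series):
    """True if every value is strictly lower than the one before it."""
    return all(later < earlier for earlier, later in zip(series, series[1:]))

# Hypothetical figures, not real data.
cycle_time_days = (10.0, 8.0)          # before, after 90 days
escalation_rates = [0.18, 0.15, 0.11]  # last three months
active_ai_users, eligible_users = 150, 200

report = {
    "cycle_time": reduction(*cycle_time_days) >= 0.20,
    "meeting_to_decision": meetings_per_decision(42, 18) < 3,
    "escalation_rate": is_declining(escalation_rates),
    "ai_engagement": active_ai_users / eligible_users > 0.70,
}

for metric, on_target in report.items():
    print(f"{metric}: {'on target' if on_target else 'needs attention'}")
```

A strict decline is a demanding test; teams with noisy monthly data may prefer to compare rolling averages instead.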

Build in course correction. Review your KPIs monthly for the first six months. Do not wait for a quarterly business review to catch a trend. AI boosts immediate performance, but those gains do not persist in solo tasks, and although culture shifts toward efficiency, challenges remain. That is why measurement must be tied to team behavior, not just tool output.

AI summaries for productivity are a good example of a feature that shows strong early gains but requires reinforcement through team norms to sustain them. If leadership stops modeling active use, adoption fades.

A practitioner's perspective: Why sustainable collaboration depends on culture and iteration

Here is what the benchmarks and frameworks do not tell you: technology adoption is a leadership problem more than a technical one.

We have seen organizations deploy impressive AI-powered platforms with every security certification in place, only to watch adoption plateau within three months. The tools worked. The culture did not shift. Team leads defaulted to existing habits, AI features went unused, and the RACI matrix lived in a folder nobody opened.

AI excels in individual acceleration but requires human oversight for team coordination. That sentence should be on the wall of every IT leadership meeting room. The tooling accelerates what humans already do well. It does not create alignment where alignment does not exist.

Sustainable collaboration improvements require two things that no platform can install: a culture that values iteration over perfection, and leaders who model the behaviors they want to see. If your project managers are still running status-only meetings after a collaboration optimization initiative, the initiative has not reached the culture yet.

Iteration also means being willing to revisit decisions you were confident in. The RACI you drafted in month one will need updating by month four. The governance structure that worked for 50 users may create new bottlenecks at 500. Building in a scheduled review cadence is not an admission of failure; it is how serious organizations sustain performance.

The teams that get this right treat collaboration optimization as an ongoing practice, not a project with a delivery date. They treat their AI-enhanced team communication platforms as living infrastructure, regularly adapting configurations, retraining staff, and updating their metrics. That iterative discipline is what separates a six-month productivity bump from a lasting operational shift.

Accelerate collaboration optimization with secure, enterprise-ready solutions

Putting this guide into practice requires the right platform underneath your process. Choosing a messaging tool that lacks bank-grade security, AI-native features, or multilingual support will create new constraints just as you resolve old ones.

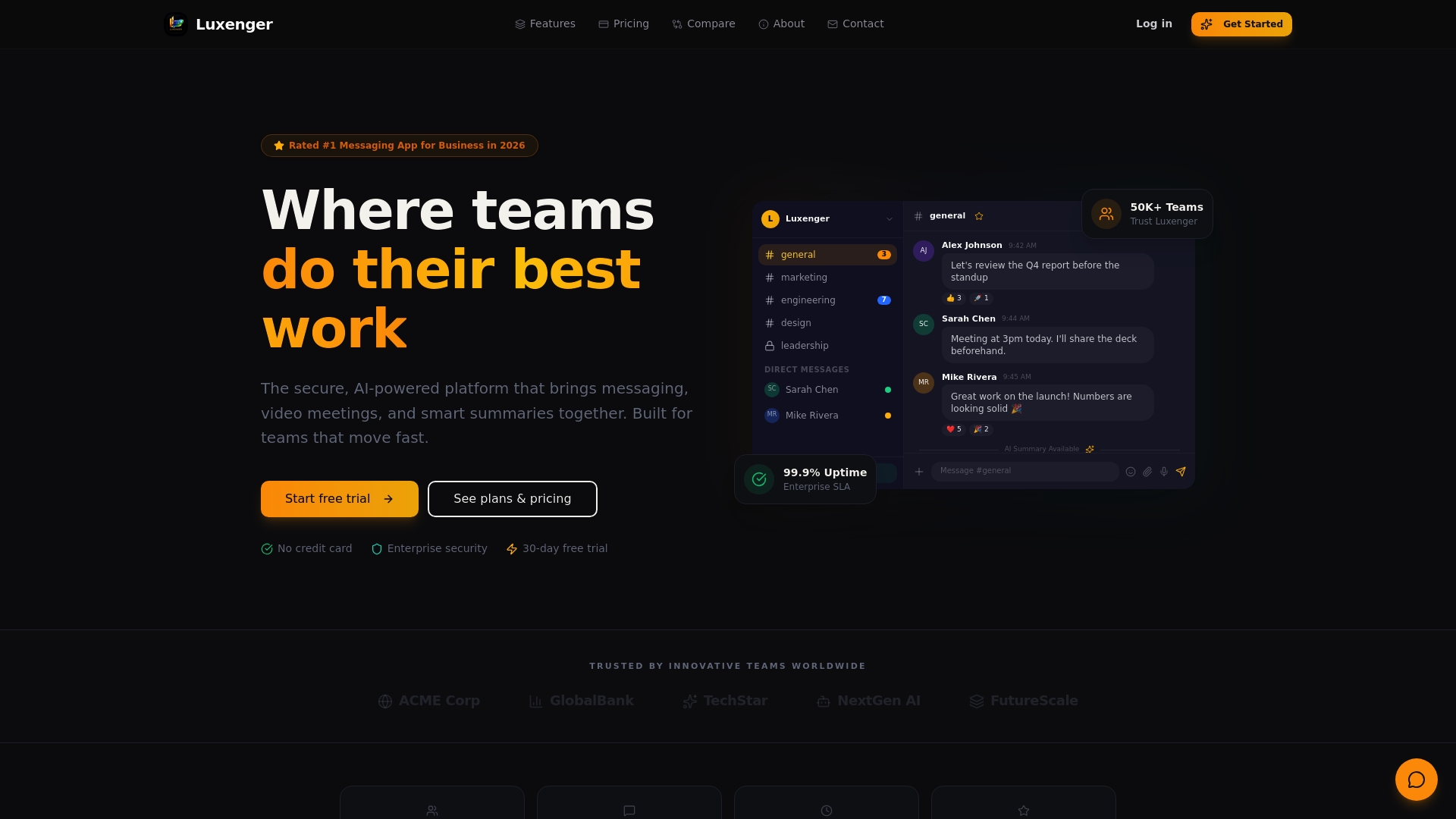

Luxenger is built specifically for the kind of secure, AI-driven collaboration this guide describes. With AI-powered conversation summaries, voice huddles for rapid alignment, and real-time translation across languages, it supports every step of the optimization process at enterprise scale. The platform meets the security standards your CISO requires and the usability standards your teams will actually adopt.

Explore secure enterprise collaboration options tailored to your industry and scale. Whether you are standardizing communication across global teams or deploying a phased rollout similar to Amgen's model, the Luxenger platform is designed to support it from day one. Review the enterprise pricing to find the plan that fits your organization's scope and growth trajectory.

Frequently asked questions

What is the RACI matrix and why is it crucial for collaboration?

A RACI matrix clarifies who is Responsible, Accountable, Consulted, and Informed for each task, preventing role overlap and making team coordination transparent and efficient. Without it, decision ambiguity and redundant communication create compounding bottlenecks.

Does AI alone eliminate collaboration bottlenecks in IT teams?

No. AI accelerates individual tasks but does not resolve accountability or communication issues on its own. Governance structures, role clarity, and active engagement remain essential.

What KPIs should IT leaders track after optimization?

Focus on domain-specific metrics like cycle time, mean time to merge, and meeting-to-decision ratio. These signals reflect real workflow performance rather than surface-level tool activity.

How can we prevent passive use of AI tools?

Active human-AI collaboration preserves worker motivation and long-term efficacy better than passive reliance does. Design training programs and team norms that require active engagement with AI features, not just passive consumption of outputs.

What is the main cause of productivity loss when scaling collaboration?

Coordination tax is the primary driver of productivity loss in large, scaling enterprises. It accumulates silently through redundant meetings, slow approvals, and misaligned communication channels.